Who Wins When Agents Come?

Autonomous AI systems are rewriting the economic patterns that organized modern society. They are doing it faster and more messily than anyone could have predicted.

We have been in a state of informational “AI tsunami” ever since ChatGPT arrived in late 2022. Dozens, maybe hundreds, of generative tools exploded onto the market within a couple of years, covering text, images, audio, video, code, and even documents like PowerPoint presentations and all sorts of design assets. I remember the FOMO of 2023 and 2024 vividly: you simply could not keep up with the headlines.

But the entire paradigm was essentially a copilot.

You chat with a tool, it makes things faster, you get more done (or not). Real limitations were everywhere. Presentation generators were not good enough. Code copilots were messy to the point where extracting value from them was itself a job. The trend was clear — people with AI could arguably do things better than people without it — but it felt incremental. An upgrade, not a transformation.

Then, in January 2026, I tried Claude’s tools, based on the Opus 4.6 model, along with some agentic features. After playing with them for a week or so, I realized things had genuinely changed. And how fast, if you think about it!

It was no longer about chatting with AI. It was a full-scale workflow (files, deliverables, projects), and on top of that, you could create agents to put it on autopilot. They could work with your browser, your file system, your email, Notion, external tools, and so on. And the hallucination rate, in my experience, had dropped visibly, to the point where real work was actually getting done.

Even Gary Marcus, a vocal critic of LLMs and things like ChatGPT, published an unusually optimistic piece, “The biggest advance in AI since the LLM,” on the neurosymbolic nature of Claude’s systems and how it changes everything.

I kept discussing this with peers: software developers, content creators, media professionals, and finance people. Everyone is excited and surprised, but beneath the excitement, people are worried about how good AI automation has suddenly become. In the early ChatGPT era, people talked about opportunities to make their jobs easier. Now the tone has clearly shifted to “how do I not lose my job in the first place?”

This essay is about what happens when that shift plays out across the economy.

How to Run a Drug Discovery Project for ‘the Price of Lunch’

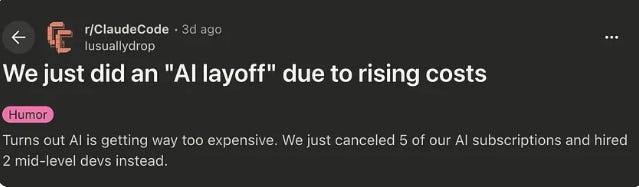

I recently came across a preprint out of Stanford, from Dr. James Zou's lab, and it's one of those papers that makes you stop and stare.

The team ran a complete drug-target evaluation with eleven AI agents organized to operate autonomously like an entire biotech company: a virtual Chief Scientific Officer (CSO) at the top, specialist divisions below, over a hundred connectors to real biomedical databases, and a reviewer agent that checks the reasoning. The agents coordinate all work among themselves, automatically and, to a degree, recursively.

The CSO agent receives a query, decomposes it into sub-tasks, and delegates to specialist agents. The specialist agents use their tools, produce outputs, and pass them back. The reviewer agent checks reasoning. The CSO synthesizes and communicates the result.
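The loop just described (decompose, delegate, review, synthesize) can be sketched in a few lines of Python. This is a minimal illustration under my own assumptions, not code from the Stanford preprint: the names `decompose`, `review`, and `run_cso` and the two specialist roles are hypothetical, the specialists are plain functions standing in for LLM-plus-tools agents, and the reviewer is a trivial placeholder check. It shows one level of delegation; a recursive version would let a specialist call `run_cso` on its own sub-question.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

# A "specialist" here is any function from a sub-task description to a text result.
# In a real system it would wrap an LLM plus database connectors (hypothetical stand-in).
Specialist = Callable[[str], str]


@dataclass
class Finding:
    specialist: str
    content: str
    approved: bool = False


def decompose(query: str) -> Dict[str, str]:
    """CSO step: split the query into sub-tasks keyed by specialist (hard-coded for the sketch)."""
    return {
        "genomics": f"Expression profile of the target in: {query}",
        "clinical": f"Prior trial evidence relevant to: {query}",
    }


def review(finding: Finding) -> bool:
    """Reviewer stand-in: reject empty outputs or ones flagged as unsupported."""
    text = finding.content.strip()
    return bool(text) and "unsupported" not in text.lower()


def run_cso(query: str, specialists: Dict[str, Specialist]) -> str:
    """Decompose, delegate, review, then synthesize only the approved findings."""
    findings: List[Finding] = []
    for name, subtask in decompose(query).items():
        finding = Finding(name, specialists[name](subtask))
        finding.approved = review(finding)
        findings.append(finding)

    summary = "; ".join(f"{f.specialist}: {f.content}" for f in findings if f.approved)
    rejected = [f.specialist for f in findings if not f.approved]
    if rejected:
        summary += f" (sent back for rework: {', '.join(rejected)})"
    return summary.strip()


if __name__ == "__main__":
    stub_specialists = {
        "genomics": lambda task: "stub expression result",
        "clinical": lambda task: "unsupported claim",
    }
    print(run_cso("target X in lung cancer", stub_specialists))
    # genomics: stub expression result (sent back for rework: clinical)
```

Keeping the reviewer as a separate step, rather than trusting each specialist’s output, is what lets the CSO route weak findings back instead of folding them into the final answer.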

This is the kind of analysis that, inside a pharmaceutical company, would typically involve multiple specialist teams coordinating over weeks or months.

One of the cases: the system evaluated B7-H3 as a therapeutic target in lung cancer. It found — on its own — that B7-H3 upregulation was concentrated in cancer-associated fibroblasts, not the cancer cells themselves, and that these fibroblasts were running an immunosuppressive program. It validated this with spatial transcriptomics. It recommended an antibody-drug conjugate.

For context, its training data stopped in January 2025. In August 2025, the FDA granted Breakthrough Therapy Designation to exactly that kind of drug, with a 48% response rate. The system arrived at the same logic independently.

For $46 in Anthropic API credits, in less than a day.

In another case, it autopsied a failed clinical trial and concluded the biology was probably right but the wrong patients were enrolled. That cost $54.

In the largest demonstration, the system spun up over 37,000 agents in parallel to curate outcome data from nearly 56,000 clinical trials — and found that drugs targeting cell-type-specific genes were 40% more likely to advance from Phase I to Phase II, with roughly a third fewer adverse events.

These are not chatbot conversations. This is the actual analytical work, including hypothesis generation, evidence synthesis, and cross-disciplinary reasoning, that scientists spend years learning to do.

But here is the bigger thing. The $46 did not simply replace one scientist or a specific function. It replaced the need for the whole arrangement — the teams, the handoffs, the reviews, the months of coordination. The work got done, and the organizational structure that existed to support the work became unnecessary in this particular case.

Yes, it is just a proof-of-concept case study, a lab experiment. Real-world deployment would be far more expensive and complex, and it may well still require humans in the loop. But it is a hint of where things are going, and quickly.

The coordination tax

Until recently, the mainstream framing was that AI replaces tasks. That is true, of course, but with the rapid emergence of agentic AI, what it really hits is something I think of as the coordination tax, and that tax operates at every level of knowledge work, not just at the management level.

You see, every knowledge worker is, in a sense, a coordination node. The drug discovery scientist synthesizes biological and chemical data, clinical literature, and cell biology into a target assessment. The marketing analyst pulls customer insights, campaign metrics, and competitive data into a recommendation. The compliance associate reads contracts against regulatory checklists. The software developer builds a module of an enterprise system against technical requirements and the specific context of the larger system. None of them is a “manager” per se, yet all of them pull disparate information together, translate it across contexts, and produce something coherent. That is coordination.

And the overhead of maintaining a network of humans doing it (the meetings, the reporting, the institutional memory, the sheer effort of keeping everything connected) is the coordination tax, or operational overhead, or whatever you want to call it. It is often huge: administrative expenses account for nearly 25% of health care spending in the US, for example.

For decades, the coordination tax was unavoidable: you could not get specialized knowledge synthesized without humans synthesizing it. Agentic AI threatens, for the first time in the history of human labor, to change that dynamic. This goes far beyond helping workers perform their specific functions faster; after all, we have had productivity tools since the spreadsheet. Agents now promise not only to perform functions but also to coordinate between them automatically, at every node, without a human in the loop. Or, rather, with substantially fewer humans in the loop.

Goldman Sachs grasped this early. Anthropic engineers were embedded at the bank for six months to build agents for trade accounting and client onboarding. CIO Marco Argenti described the agents as "digital co-workers" for complex, process-intensive professions.

Note the word: professions.

Not just functions. The actual professions: the people who handle compliance, accounting, and client relations. Meanwhile, CEO David Solomon announced a multiyear plan to reorganize the bank around generative AI, with a stated goal to “constrain headcount growth.”

But here is where the story gets more interesting than the usual “AI takes jobs” headline.

Mastercard launched what it calls a Virtual C-Suite: an AI agent that acts as a digital CFO for small businesses, analyzing cash flow, flagging risks, and recommending actions based on 175 billion annual transactions. Most small businesses never had anyone doing this work. They couldn't afford a CFO, so financial strategy was whatever the owner could figure out between everything else. Now it is a subscription. For millions of small businesses, agents could be delivering expertise that simply didn't exist before, not replacing a human but filling a gap no human ever occupied.

In healthcare, both sides of the tension are visible simultaneously.

DeepRare, a multi-agent system for rare-disease diagnosis published in Nature, was reported to outperform experienced physicians across 6,401 cases. For the 300 million people enduring diagnostic odysseys that average five years, that is transformative. More broadly, administrative costs eat up a quarter of US healthcare spending.

At the same time, only 3% of healthcare organizations have deployed agents in live clinical workflows, according to one study.

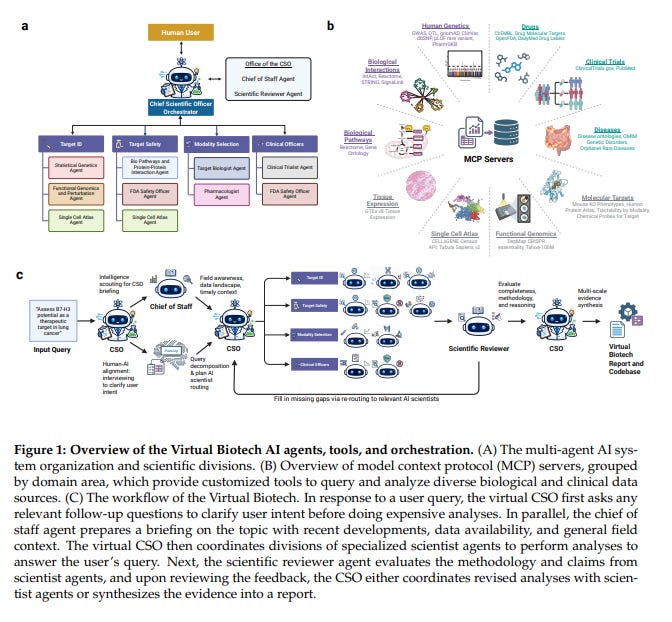

Whatever the adoption numbers, AI capability is accelerating, no question about it. At Shanghai Jiao Tong University, for instance, ASI-Evolve, a system that reads papers, forms hypotheses, designs experiments, runs them, and uses the results to improve its own architecture, produced 105 AI architectures that beat the best human-designed AI.

Yes, you read that right: AI making AI better. My brain pictures scenes from the movie “I, Robot,” which I first watched about a decade ago, and now it seems that at least part of it is becoming reality (progress in robotics and drones, plus AI, plus agentic systems).

The team then pointed ASI-Evolve at drug-target prediction, where it arguably outperformed human experts. This claim should be treated with caution and read in context; I would treat it as a theoretical proof of concept, not a deployment-grade result. But the trend is there…

“Looking ahead, the scope of AI self-acceleration extends beyond individual models to the full AI development stack—architecture, data, algorithms, and infrastructure yet to be explored. As agentic systems take on more of the implementation and iteration work, human scientists can shift from being the executors of solutions to the definers of problems—concentrating their expertise on the questions that matter most and leaving the expansive search through hypothesis spaces to AI. We expect this paradigm to drive not only the self-improvement of individual models, but the self-evolution of the entire AI field,” the authors conclude.

The recursive loop is gradually closing.

The labor displacement could already be real

“Entry-level jobs will be replaced by AI systems,” Anthropic CEO Dario Amodei told Fox News. “We may indeed have a serious employment crisis on our hands.”

In healthcare, the agent is already arriving with a name and a face. Hippocratic AI built “Ana” — an autonomous AI agent that calls patients, prepares them for appointments, answers medical questions, and operates around the clock in multiple languages. The company initially marketed it at $9 an hour, compared with $40 for a registered nurse. Hundreds of US hospitals now use AI systems that go beyond monitoring vitals — they trigger step-by-step care action plans, directing what nurses should do before the nurse has evaluated the patient.

Michelle Mahon of National Nurses United put it this way: “The entire ecosystem is designed to automate, de-skill, and ultimately replace caregivers.”

And yet, as noted above, only 3% of healthcare organizations have deployed agents in live clinical workflows. The capability is racing ahead of the institutional readiness to use it safely, and the people whose judgment built the profession’s trust are being told the machine knows better…

While I am not a big fan of alarmist predictions, nearly every report I reviewed while researching this article says the same thing: the job market is changing rapidly.

However, the displacement picture is more nuanced than either the hype or the panic suggests. BCG's microeconomic modeling estimates that over the next two to three years, 50% to 55% of US jobs will be reshaped by AI — but reshaping is not replacing.

Only 10% to 15% of jobs face actual elimination in a five-year horizon.

The more interesting finding is where the impact concentrates. Jobs with 40% or more automatable tasks, about 43% of all US roles, are the ones that trigger organizational redesign.

And the pattern is not uniform: call center representatives get substituted because demand for their work is bounded, while software engineers get amplified because lower costs unlock more demand for what they produce. The most structurally dangerous category is what BCG calls "divergent" roles (about 12% of jobs), where entry-level positions get automated while senior roles persist or grow.

Insurance sales assistants disappear while senior advisory roles expand. IT support technicians handling routine tickets disappear while systems oversight roles grow. And so on.

The career ladder doesn't just lose rungs at the bottom; it loses the bottom entirely. And the skills required for the remaining roles are higher, not lower: more judgment, more oversight, more cognitive intensity.

The people who thrive will be those who can grade the agents' work, not those who compete with it.

Now, here is what I find genuinely alarming about job market prospects: CEOs are not waiting for the agents and infrastructure to be ready. They are not waiting for governance to be built. They are not even waiting for agents to be reliable. Displacement does not require AI agents to be perfect; it only requires decision-makers to believe they are good enough. I think that with models like Opus 4.6 and beyond, Claude Cowork and Claude Code, and all sorts of other tools, that bar has effectively been cleared.

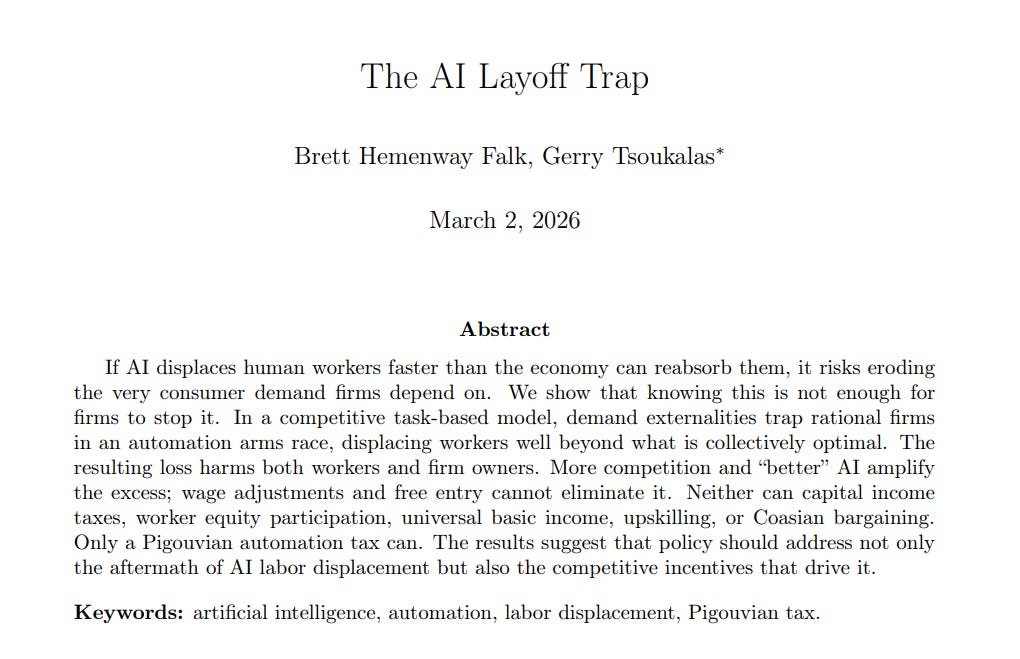

A game theory paper from UPenn and Boston University formalizes what this means at scale. AI-driven automation, the authors show, is a Prisoner’s Dilemma operating across the entire economy. Every company that automates gains a cost advantage. Every company that does not gets outrun by competitors that did. But every displaced worker is also a consumer who stops buying. Automating is the rational choice for each individual CEO, yet it becomes collectively suicidal when everyone makes it at once.

The researchers tested universal basic income (UBI) and profit taxes; in their model, neither resolves the coordination failure. The only mechanism that works there is a Pigouvian automation tax, one that prices the downstream damage into the automation decision itself.
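To make the dilemma concrete, here is a toy two-firm version in Python. It is a sketch under assumptions of my own, not the UPenn/BU model: the payoff numbers and the function names (`payoff`, `best_response`, `nash_equilibria`) are hypothetical. Each firm captures a private saving from automating, but every automating firm shrinks demand for both firms. As long as the saving (4) exceeds the damage a firm does to itself (3) but is smaller than the total damage it causes across both firms (6), automating is each firm’s dominant strategy, even though both end up worse off. A Pigouvian tax equal to the damage imposed on the other firm flips the outcome.

```python
from itertools import product
from typing import List, Tuple

# Hypothetical toy numbers, chosen only so that the game is a Prisoner's Dilemma.
BASE = 10.0    # a firm's profit when nobody automates
SAVING = 4.0   # private cost saving a firm captures by automating
DAMAGE = 3.0   # demand lost by EACH firm for every firm that automates


def payoff(me: bool, other: bool, tax: float = 0.0) -> float:
    """Profit of one firm given both choices (True = automate) and a per-firm automation tax."""
    automators = int(me) + int(other)
    return BASE + (SAVING - tax) * int(me) - DAMAGE * automators


def best_response(other: bool, tax: float) -> bool:
    """Automate only if it strictly beats not automating, given the rival's choice."""
    return payoff(True, other, tax) > payoff(False, other, tax)


def nash_equilibria(tax: float = 0.0) -> List[Tuple[bool, bool]]:
    """Pure-strategy equilibria of the symmetric two-firm game."""
    return [
        (a, b)
        for a, b in product([False, True], repeat=2)
        if best_response(b, tax) == a and best_response(a, tax) == b
    ]


if __name__ == "__main__":
    print(nash_equilibria())                         # [(True, True)]: both automate
    print(payoff(True, True), payoff(False, False))  # 8.0 10.0: both worse off
    # Pigouvian tax: charge each automator the damage it imposes on the OTHER firm.
    print(nash_equilibria(tax=DAMAGE))               # [(False, False)]
```

With n firms, the external damage per automator in this toy setup becomes DAMAGE * (n - 1), so the corrective tax grows with the size of the economy the damage spills into.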

Furthermore, I think the dilemma does not stop at the level of firms; it probably plays out between nations, too.

In Europe, the EU AI Act is the most ambitious regulatory attempt in the world, and as a result, the continent’s AI companies continue to risk falling behind American and Asian competitors (especially China), with talent migrating to less restrictive jurisdictions.

China is running the opposite experiment: Tencent is wiring agents into WeChat for hundreds of millions of users, with government-backed infrastructure at a scale that is hard for even Western democracies to match, especially since China’s governance model arguably leans toward state capacity over individual worker protection.

In the US, activists stalled $98 billion in data center projects in a single quarter, even as the administration pushes AI as a geopolitical necessity. In one example of many, Muscogee Nation citizens blocked a hyperscale data center on tribal land purchased for agriculture, with one representative saying: “This feels like a modern-day land run.”

Every jurisdiction is making the same choice under different constraints:

1) Accelerate and absorb the social cost.

2) Regulate and absorb the competitive cost.

3) Or find a third path that nobody has demonstrated works.

The argument behind the “Luddite fallacy” label, that technology ultimately creates more jobs than it destroys, has held true in the long run, but the long run could mean 60 to 80 years of painful disruption. Also, previous technologies augmented workers at their coordination nodes, where various functions still needed an operator. This time, with AI agents, things are different: the agents are the autonomous operators. Will the Luddite fallacy remain a fallacy?

Flying blind

Of all the contradictions we have just reviewed, the most ironic is that agents, for all their mind-blowing capabilities, are still half-baked for mass public and corporate use at scale…

Mark Kropf, founder and CEO of Rensei (an enterprise OS for AI agent fleets), who runs autonomous coding agents in production, described shipping 19 releases in 14 days, none of which made the agents “smarter.” All of them made the system less likely to catch fire.

His real incidents: agents in infinite retry loops burning $200 an hour, workers crashing mid-task with no reassignment, agents editing each other’s files in a monorepo, and one agent’s API calls rate-limiting the entire fleet. His prediction: the AI agent hype is going to hit a wall in 2026, because nobody is solving the infrastructure problems.
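The first of those incidents is the easiest to illustrate: an agent stuck in a retry loop needs a hard budget, not just a retry count. Below is a minimal Python sketch of a retry wrapper capped by both attempts and dollars spent. It is my own illustration, not Rensei’s code; `call_with_budget`, `cost_per_call`, and `max_spend_usd` are hypothetical names, and the per-call cost is assumed to be known up front, which real token-based billing rarely guarantees.

```python
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


class BudgetExceeded(RuntimeError):
    """Raised when a task exhausts its attempt cap or its spend cap."""


def call_with_budget(
    task: Callable[[], T],
    cost_per_call: float,
    max_attempts: int = 5,
    max_spend_usd: float = 10.0,
    base_delay_s: float = 0.5,
) -> T:
    """Retry `task` with exponential backoff, but never past the attempt or spend cap."""
    spent = 0.0
    last_error: Optional[Exception] = None
    for attempt in range(max_attempts):
        # Check the budget BEFORE paying for another call, not after.
        if spent + cost_per_call > max_spend_usd:
            raise BudgetExceeded(
                f"spend cap hit after {attempt} attempts (${spent:.2f})"
            ) from last_error
        spent += cost_per_call
        try:
            return task()
        except Exception as err:  # a real system should retry only transient errors
            last_error = err
            if attempt + 1 < max_attempts:
                time.sleep(base_delay_s * 2 ** attempt)  # 0.5 s, 1 s, 2 s, ...
    raise BudgetExceeded(
        f"gave up after {max_attempts} attempts (${spent:.2f})"
    ) from last_error


if __name__ == "__main__":
    def stuck_agent() -> str:
        raise TimeoutError("tool call timed out")

    try:
        call_with_budget(stuck_agent, cost_per_call=1.0, max_attempts=100,
                         max_spend_usd=3.0, base_delay_s=0.0)
    except BudgetExceeded as exc:
        print(exc)  # spend cap hit after 3 attempts ($3.00)
```

The other incidents (orphaned tasks, file collisions, fleet-wide rate limits) need their own mechanisms, such as leases, locks, and shared quotas, but the pattern is the same: none of it makes the agent smarter, all of it keeps the system from catching fire.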

Neha Deodhar from Google wrote a great technical summary of why agents still break in production in ways “classical” software does not.

Challenges with agents are as deep as their capabilities are impressive.

For instance, 82% of business leaders in a recent Celonis survey believe AI will fail to deliver ROI if it does not understand how the business actually runs. Yet the context that makes the work legible disappears with the people who carried it once they are replaced by agent-driven automation.

The knowledge base agents consume is itself corruptible.

Researchers recently invented a fake disease, bixonimania, published preprints with fictional authors, thanked “Professor Sideshow Bob” in the acknowledgements, and wrote “this entire paper is made up” in the methods.

Four major AI platforms recommended ophthalmology visits for a condition that does not exist. Then real researchers cited the fake papers in a real journal. The AI treats formatting as credibility. The humans never read what they cited. Both systems, algorithmic and institutional, seem to reward volume over verification…

And when AI is used not just to consume knowledge but to substitute for it, the results are worse.

The FDA recently issued a warning letter to a pharmaceutical manufacturer that had used AI to create its drug specifications, manufacturing procedures, and production records.

When investigators asked why the company had not performed required process validation, company officials replied that they “were not aware” of the legal requirement because AI had not informed them.

The facility had insects, dirt, and an open dock door exposing the manufacturing area to the outside. A company making real drugs, sold to real people, that outsourced its understanding of its own regulatory obligations to a chatbot. Nobody checked whether the outputs were accurate or even legal. An attorney who reviewed the case put it simply: no one at the company knew enough to recognize what AI was telling them was wrong.

Next, agentic AI cybersecurity is the wild west…

McKinsey’s Lilli AI platform was breached by ethical hackers who gained full access to 100,000 documents used by 30,000 consultants. Over 1,800 OpenClaw instances were found leaking API keys and credentials.

Then there is the pricing question.

The current wave of AI and agent adoption is widely believed to run on subsidized compute, with AI labs pricing tokens below cost to capture market share.

Users are already reporting that a single message on some platforms consumes a meaningful percentage of their plan allocation. As the honeymoon ends and consumption-based pricing takes hold, the startups built on cheap inference may discover their unit economics don’t survive.

The enterprises that restructured around agents may find the cost merely shifted from salaries to compute bills. Who bears that cost, and who profits from it, is a power question the industry has barely begun to ask…

Finally, there is one limitation of modern AI that no infrastructure or pricing model can fix, at least for now.

Liang Chang ran what he calls a “Time Machine” experiment, sending today’s best AI models back to 2012 to make one of the biggest strategic calls in modern oncology. Two companies were racing to bring cancer immunotherapy to market. Bristol-Myers Squibb had a two-year head start. Both faced the same fork: run a broad trial enrolling all patients, or restrict enrollment to the subset most likely to respond based on a promising but unproven biomarker. BMS chose broad. Merck chose narrow. The broad trial failed. The narrow one succeeded so overwhelmingly it was stopped early. By 2024, Merck's drug was generating $29.5 billion a year; BMS's, $9.3 billion.

Chang assembled AI agent teams and gave them only the information available before June 2012. Both teams recommended the broad path, unanimously. The path that lost. The competitive intelligence agent identified the exact scenario that materialized — and still voted broad.

I should be honest about a critique I have heard from several people in the industry: the models were not truly time-locked. They were trained on post-2012 text discussing how the story unfolded. But that actually makes the point stronger. Even with hindsight baked into its training data, the AI converged on the defensible, consensus choice. It could not make the contrarian bet that won.

“AI can give you the best possible analysis. It can’t give you the courage to go against it,” says Chang.

Organizations might be handing strategic authority to consensus machines while letting go of the people whose judgment — imperfect, intuitive, sometimes irrational — was the only thing that could override consensus when consensus was wrong. That is not a bug to be patched, it is the nature of the AI systems.

What’s ahead?

Reid Hoffman's Superagency argues that AI is the ultimate human amplifier — not a replacer but an extension of human capability, a superpower distributed to millions simultaneously.

It is a compelling and optimistic thesis, and I would like to believe it.

However, the question this essay raises is whether the institutions, the incentive structures, and the career pipelines that turn amplified individuals into a functioning economy can survive the transition.

After all, the "amplifier" framing assumes the benefits distribute. The game theory we discussed above, however, shows they concentrate.

Hoffman himself acknowledges this — he cites Ted Chiang's critique that "the economic value created by the personal computer and the internet has mostly served to increase the wealth of the top one percent of the top one percent" and says the case for AI rests on breaking that pattern.

My own opinion about the promise of an agentic future is still evolving. However, what concerns me is that the emerging agent economy seems to be running on a resource it is actively destroying.

Every institution deploying agents depends on human judgment to direct them, evaluate their output, and override them when they are wrong. That judgment was built over years inside the coordination layer: the junior roles, the apprenticeships, the institutional memory, the domain knowledge accumulated through experience. It is the same coordination layer that agents are arguably starting to eliminate at scale.

We are simultaneously increasing the demand for human judgment and dismantling the system that develops it, which I think is the central paradox of the agent economy.

And it belongs to everyone — the CEO deciding how many people to keep, the government choosing how to regulate, the founder deciding what to build, and the twenty-year-old student wondering whether the career she is studying for will even exist by the time she is ready for it…

The twentieth century built its prosperity on a sort of bargain: invest in human talent and coordination, and the growth compounds. The agent economy breaks that bargain, at a scale unknown to history.

What it builds in its place might be the defining question of the next decade…

Thanks for reading!

— Andrii